I’ve attended the Gay Pride Parade in New York on more than one occasion. The event itself holds a special significance for many people who have been close to me, and I’m always happy to see them happy, even if parades normally aren’t my cup of tea. That said, I have found certain aspects of the event a little peculiar, at least with regard to its execution. I had this to say about it some years ago:

One could be left wondering what a straight pride parade would even look like anyway, and admittedly, I have no idea. Of course, if I didn’t already know what gay pride parades do look like, I don’t know why I would assume they would be populated with mostly naked men and rainbows, especially if the goal is fostering acceptance and rejection of bigotry. The two don’t seem to have any real connection, as evidenced by black civil rights activists not marching mostly naked for the rights afforded to whites, and suffragettes not holding any marches while clad in assless leather chaps.

Colorful exaggerations aside, there’s something very noteworthy to think about here. While it might seem normal for gay pride events to be rather flamboyant affairs, there need not be any displays of promiscuous sexuality inherent to the event. That is, if people were celebrating a straight, monogamous relationship style with a parade, I don’t think we’d see many people dressing down or, in some cases, going without clothing at all. I imagine the event would be substantially more modest as, well, most other parts of life tend to be.

“From: Straight Pride Boat Ride, 2016”

The relevance of this point comes when one begins to consider what types of people in the world are most opposed to homosexual lifestyles and, accordingly, pose the largest obstacles to things like marriage and adoption rights for the gay community. When considering who those people are, the answer that will no doubt spring to many minds is the conservative, religious type (likely because that would be the correct answer). But why are such people the most likely to condemn homosexuality on a moral level? A tempting answer would be to point to religious texts condemning homosexuality, but that’s a rather circular explanation: religious people condemn homosexuality because they believe in a doctrine that condemns homosexuality. It’s also incomplete, as many other parts of the same doctrines are followed only selectively. We’re also left wondering why those doctrines condemned homosexuality in the first place, placing us back at square one.

A more detailed picture begins to emerge when you consider what predicts religiosity in the first place: what type of person is most drawn to such groups. As it turns out, one of the better predictors of who ends up associating themselves with religious groups and who does not is sexual strategy. Those who are more inclined to monogamy (or, more precisely, opposed to promiscuity) tend to be more religious, and this holds across cultures and religions. By contrast, religiosity is not well predicted by general cooperative morals or behavior. It would be remarkable if religions from all parts of the world had independently stumbled upon a common distaste for promiscuity unless sexual strategy were somehow tied to religious belief. Something about sexual behavior is uniquely predictive of religiosity, which ought to seem strange when you consider that one’s sexual behavior should have little bearing on whether a deity (or several deities) exists. It has even been proposed that religious groups themselves function to support particular kinds of relatively monogamous mating arrangements. In that light, religious groups can be viewed as a support structure for monogamous couples who plan on having many children.

With that perspective in mind, the religious opposition to promiscuity becomes substantially clearer: promiscuity makes monogamous arrangements more difficult to sustain, and vice versa. If a couple plans on having a lot of children, the man faces the risk of cuckoldry (raising a child that was unknowingly sired by another man) while the woman faces the risk of abandonment (if her husband runs off with another woman, leaving her to care for the children alone). As such, having lots of promiscuous men and women around who might lure your partner away, or stop them from investing in you in the first place, does the monogamous type no favors. In order to support their more monogamous lifestyle, then, these people begin to punish those who engage in promiscuous behaviors, making such strategies more costly to engage in and, accordingly, rarer.

The first punishment for promiscuity – spankings – didn’t have the intended effect

While homosexual individuals themselves don’t exactly pose direct risks to heterosexual, long-term mating couples, they may nevertheless be condemned to the extent that the gay community is viewed as promiscuous. There are a few possible reasons for that outcome to obtain. Perhaps homosexuals are viewed as supporting and encouraging promiscuity, and letting that go unpunished would start other people down a path towards promiscuity (similar to how recreational drug use is also condemned by the long-term maters). Perhaps all sorts of non-traditional sexual behaviors are condemned by conservative groups and homosexuality just ends up condemned as a byproduct. Whatever the explanation for this condemnation, however, a key prediction falls out of this framework: moral condemnation of homosexuality ought to increase to the extent that gay people are viewed as promiscuous and decrease to the extent that they are viewed as monogamous. As homosexual groups (particularly men) are viewed as more promiscuous than their heterosexual counterparts (because they are, from every data set I’ve seen), this might help explain the condemnation and, in turn, suggest a way to do something about it.

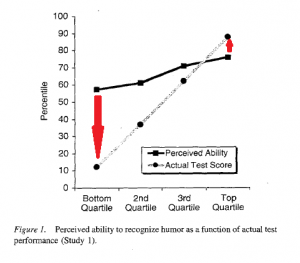

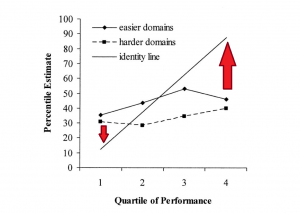

This is exactly what a new paper by Pinsof & Haselton (2017) sought to test. The pair recruited approximately 1,000 participants online. The participants read either an article reporting that gay men had more sexual partners than straight men, or an article reporting that gay and straight men had the same number of partners. Participants were also asked about their perceptions of how promiscuous gay men are, their stance on gay rights, and their own mating orientation (whether they thought short-term sexual encounters were acceptable or not).

As expected, there was an appreciable relationship between one’s mating orientation and one’s support of gay rights: the more long-term their mating strategy, the less supportive of gay rights they were (r = -0.4). That said, despite men being more accepting of promiscuity than women, there was no relationship between gender and support for gay rights. Crucially, an interaction was observed between experimental condition and mating orientation when it came to predicting support for gay rights: those who were particularly accepting of short-term mating arrangements opposed gay rights very little regardless of which article they had read about gay men’s sexual behavior (Ms ≈ 2.25 in both groups, on a 1–7 scale where higher numbers mean more opposition). However, among those who were relatively less accepting of short-term mating, there was a significant difference between the two conditions: after reading an article about how gay men were more promiscuous, opposition to gay rights was higher (M = 4.25) than it was in the condition where they read that gay men were no more promiscuous than straight men (M = 3.5).
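To make the interaction a bit more concrete, here’s a small sketch that just does the arithmetic on the approximate means reported above. It assumes mating orientation can be collapsed into two groups, and the group labels and dictionary layout are my own hypothetical choices rather than anything from the paper. It only computes descriptive differences between cell means; it does not reproduce the paper’s actual statistical analysis or its significance tests.

```python
# Approximate cell means from the reported results (1-7 scale; higher = more opposition).
# Keys are (mating orientation group, article condition); the labels are hypothetical,
# and the values are approximations, not the paper's raw data.
cell_means = {
    ("short-term accepting", "more promiscuous"): 2.25,
    ("short-term accepting", "equally promiscuous"): 2.25,
    ("less short-term accepting", "more promiscuous"): 4.25,
    ("less short-term accepting", "equally promiscuous"): 3.5,
}


def simple_effect(orientation):
    """Difference in opposition between the two article conditions within one group."""
    return (cell_means[(orientation, "more promiscuous")]
            - cell_means[(orientation, "equally promiscuous")])


short_effect = simple_effect("short-term accepting")
long_effect = simple_effect("less short-term accepting")

# The interaction contrast: how much the article's effect differs between the two groups.
interaction = long_effect - short_effect

print(short_effect, long_effect, interaction)
```

The point of the exercise: the article shifted opposition by roughly three-quarters of a point among the less short-term-accepting participants and by roughly nothing among the more accepting ones, and that difference in differences is what the interaction amounts to.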

Acceptable

By manipulating perceptions of whether gay men were promiscuous, the researchers were also able to manipulate opposition to gay rights. So, if one is interested in achieving greater support for the homosexual community, that’s important information to bear in mind. It also brings me back to the initial point I mentioned about the Gay Pride events I have attended. While I was there, I couldn’t help but wonder whether the atmosphere of sexual promiscuity surrounding the parade would be off-putting to a substantial percentage of the population (even within the gay community), and that intuition seems consistent with the present data. At points, the Gay Pride events go beyond a simple celebration and acceptance of homosexuality, as that celebration is frequently coupled with displays of sexual promiscuity. It seems that many people might have less of a problem with the former if the latter weren’t tagging along.

Then again, perhaps promiscuity will always be a bit more closely linked with the homosexual community in general, given that children do not result from such unions (making them less costly to engage in) and because heterosexual men are usually only as promiscuous as women allow them to be. If women were just as interested in casual sex as men, there would likely be a lot more casual sex going on. When men are attracted to other men, however, the barriers that usually hold promiscuity in check (children and women’s desires) are much weaker. That does raise the interesting question of whether a different pattern holds for lesbians (whose relationships tend to be less promiscuous than gay men’s), and it’s certainly one worth pursuing.

References: Pinsof, D. & Haselton, M. (2017). The effect of the promiscuity stereotype on opposition to gay rights. PLoS ONE 12(7): e0178534. https://doi.org/10.1371/journal.pone.0178534